Blog

-

The Future of the Citizen Experience

Citizens are demanding more from their governments, expecting the same level of personalised service that they receive from the private sector. This shift in expectations, coupled with rapid technological advancements, presents both challenges and opportunities for the public sector. To thrive in this rapidly evolving landscape, a human-centred approach to citizen experience is paramount.

-

Why are we ashamed of marketing?

One thing I’ve noticed over the years is that within the Public Sector we seem to be ashamed of the idea we might need to market our products and services. In the Private Sector, Product Management as a profession grew out of the marketing profession. In the Public Sector we stress the importance of planning…

-

The Women who made Digital happen in the UK:

This International Women’s Day I want to talk about the women; those innovators, pioneers, and revolutionaries; who made Digital in the UK what it is today. When we consider the impact women have had within Digital, top of any list must be Ada Lovelace. Ada has long been one of my personal icons. Daughter of…

-

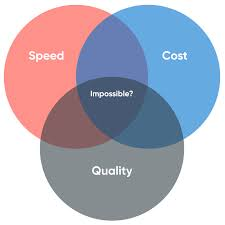

Prioritisation

How do we get Senior Leaders on board? For the last 8 months I’ve been busy running Product Management training for various Public Sector clients, and one topic cohorts are always keen to focus on and discuss is prioritisation. Quite often there are multiple areas of prioritisation people are keen to understand further; firstly, how can…

-

Ada Lovelace Day 2023 – Resilience

To celebrate #AdaLovelaceDay this year, I was lucky enough to get to spend the day with the wonderful folks at @DigitalHer (part of Manchester Digital). In the morning they ran an Inspiration Breakfast event for women working within Tech with some lightning talks and breakout sessions. Then in the afternoon they ran an Inspiration…

-

The importance of having a Product Mindset.

This week is @DWPDigital’s Product Mindset week, with some fantastic sessions and Digital Showcases on the work DWP have been doing to deliver Products that add value for users, and sessions covering things like storytelling, Design thinking, Product Management, Prioritisation and how we should be using data to help decision making, etc. The resounding…

-

Empowering your Teams

How to create the culture needed for success. One discussion that comes up regularly when I’m running Product Management Training courses, or coaching new Delivery Teams, is how they can encourage their leaders to empower them, and trust their decisions more. Whether it’s having their leaders back their decisions when something has gone wrong/ or…

-

Somewhere under the Double Rainbow

Discussing Intersectionality in the LGBTQIA+ & Neurodivergent Community. As a queer woman with ADHD, the subject of intersectionality is one I’ve always been interested in. There have been numerous discussions and studies about the links between people with Autism Spectrum Conditions (ASC) and Gender Dysphoria; with the theory being that there are many Trans/ gender-diverse…

-

Being Product Led

Helping organisations move from ‘doing Product Management’ to ‘being Product led’. As you might expect from someone whose career has been within the Product space, and who has worn various ‘Head of Product’ type hats over the years, I spend a lot of time thinking and talking about Product Management. For the last few months I’ve…

-

Starting your Career off strong

If you’re taking the first step into your career, where do you start? It’s that time of year, when companies like Deloitte get invited to speak to lots of universities, and organise events to publicise their Graduate and career entry schemes. In the last month I’ve been lucky enough to speak to students at the…